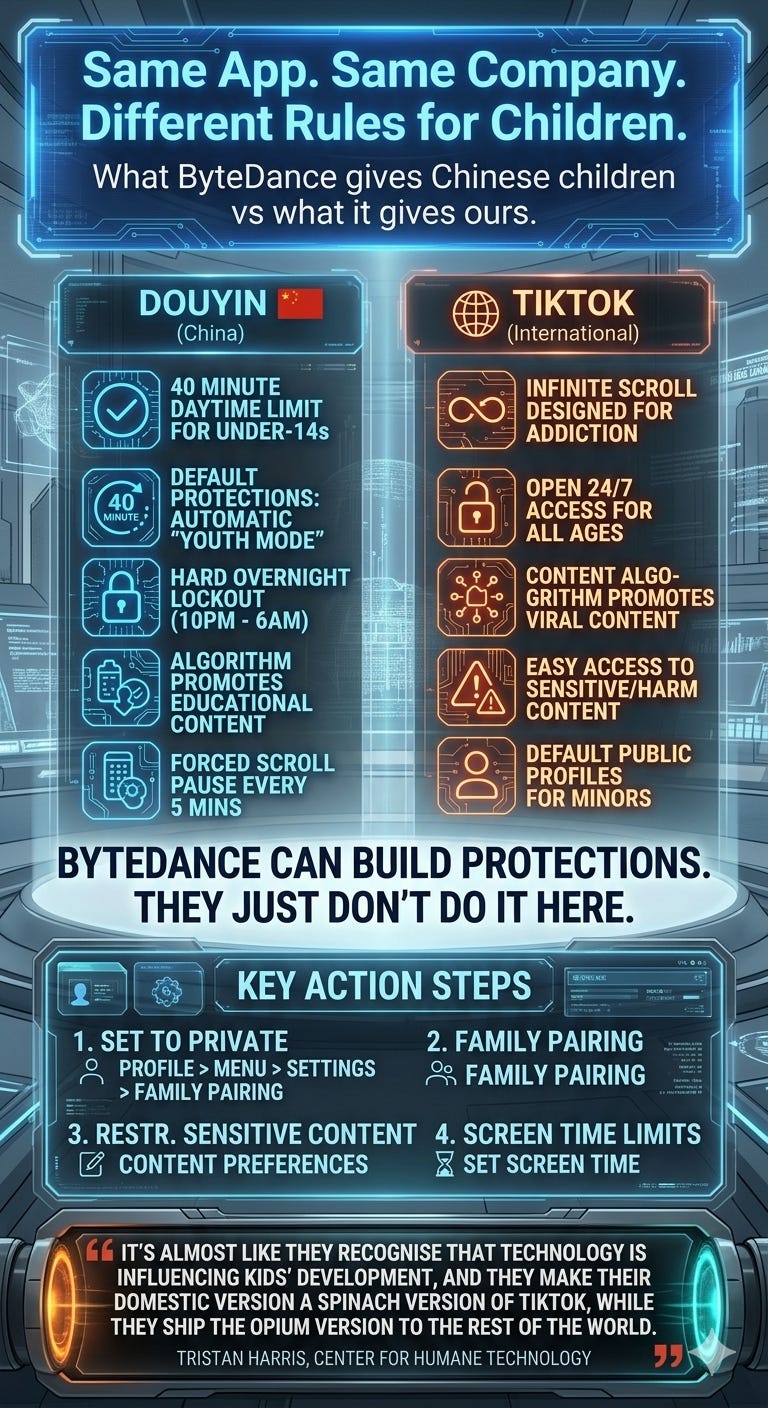

ByteDance Protects Chinese Children. So Why Doesn't It Protect Ours?

The same company. The same app. Two completely different versions, one for Chinese children, one for the rest of the world.

And when you look at what Chinese children actually get compared to what other children get, I’ll be honest with you: it concerns me a great deal.

Let me explain.

Two Apps. One Company. Very Different Rules.

The TikTok your child uses was made by a Chinese company called ByteDance. What you might not know is that ByteDance also runs a completely separate version of the same app inside China, called Douyin.

Douyin launched in 2016. TikTok came a year later, built on the same foundations, for the rest of the world.

They look almost identical. Same logo. Same short-form video format. Same underlying algorithm. Same parent company writing the cheques.

But when it comes to children? They are worlds apart.

What Chinese Children Actually Get

In China, any child under 14 who opens Douyin is automatically placed into something called Youth Mode. Not as an opt-in. Not as something a parent has to go hunting for in a settings menu. It switches on by default.

Here’s what that means in practice.

The daily time limit is 40 minutes. Hard stop. No override. The app simply closes. And children cannot access it at all between 10pm and 6am. There is an overnight lockout built into the platform itself.

The algorithm doesn’t serve those children entertainment-led content. It serves them science experiments, museum exhibits, historical explainers, and educational material. There is even a five-second forced pause between videos, deliberately designed to interrupt the addictive scroll loop, with prompts that say things like “put down the phone” and “go to bed.”

Parents can also specify the types of content they want their child to see, which gives the algorithm direction based on what the family actually values.

Read that back. A five-second pause and a message telling your child to go to bed are built into the app.

What ByteDance Gives Our Children Instead

No default Youth Mode. No hard time limit. No overnight lockout.

TikTok introduced a 60-minute default daily limit for under-18s back in 2023, but teenagers aged 13 to 17 can override that limit themselves. Without any parental involvement. The algorithm is the same one that serves adults. And we already know what that algorithm does, because research has shown it can serve self-harm and eating disorder content to newly registered teen accounts within minutes.

That is not a system designed with your child’s well-being in mind.

“Spinach for Chinese Kids. Opium for Ours.”

The quote that captures this best came from Tristan Harris, a former Google employee and co-founder of the Center for Humane Technology, speaking on the US programme 60 Minutes. He described ByteDance as making what he called a “spinach version” of the app for Chinese children, while shipping what he called the “opium version” to the rest of the world.

It’s a brutal framing. But it’s not far wrong.

Now, the Honest Part

I want to be straight with you here, because I think credibility matters more than a clean narrative.

Douyin’s child protections are not some act of corporate virtue. They exist because the Chinese government demands them. The Chinese political system allows authorities to move quickly, and ByteDance has been under sustained regulatory pressure since at least 2021, when the Cyberspace Administration of China cracked down on what it called “unhealthy fan culture” and pushed platforms to promote content that aligns with what it describes as “mainstream values.”

That is not a model anyone in this country should want to copy wholesale. Douyin’s version of content moderation also removes LGBTQ+ content, restricts political speech, and enforces cultural censorship that most of us would find deeply troubling.

There is also a surveillance angle. To enforce age limits in China, every Douyin account is linked to a user’s real identity, with facial recognition used to monitor livestream creation. The privacy implications of that are significant.

So no. I’m not holding China up as a gold standard. I’m not suggesting Ofcom should demand facial recognition or state-controlled content filtering.

What I am saying is this.

ByteDance knows exactly how to build child-protective features into this platform. They’ve proven it. The technology exists. The capability exists. The only thing that changes between China and the UK is whether the law demands it.

And that is a corporate accountability story, not a geopolitical one.

The Real Question

One of the clearest insights I’ve read on this comes from researchers who studied both platforms. Their conclusion was straightforward: companies are reluctant to make changes before being required to by law. ByteDance behaves no differently from Silicon Valley’s biggest players in that regard.

So the question for us in the UK is simple.

We have the Online Safety Act. We have Ofcom as our regulator with real enforcement powers. Ofcom’s Protection of Children Codes came into force in July 2025. Major platforms including TikTok have introduced age verification. That is a start.

But age verification is only step one.

The next step, and this is where I want to see Ofcom push hard, is algorithmic accountability. Because knowing how old a user is and then continuing to serve them the same content as an adult is not child safety. It’s a box-ticking exercise.

If ByteDance can build a version of this algorithm that pushes educational content and cuts off access at bedtime when the Chinese government tells them to, they can do it here. They choose not to because nobody has made them.

That needs to change.

What You Can Do Right Now

While we wait for regulators to catch up, here’s what I’d encourage you to do this week if your child is on TikTok.

TikTok does have a tool called Family Pairing, and it’s genuinely useful when it’s set up properly. The problem is that most parents don’t know it exists. Here’s how to find it:

Go to your child’s profile, tap the menu in the top right corner, go to Settings, and look for Family Pairing. From there you can link your account to theirs, set content filters, apply screen time limits, and restrict or disable direct messages for under-16s.

While you’re in there, set the account to private. Disable location sharing. Set who can Duet or Stitch with your child’s content to Friends only.

These settings don’t make TikTok safe. But they close the most obvious doors.

And have the conversation with your child about why you’re doing it. Not as a punishment. Not as surveillance. As a family decision about how much of their attention they want to hand over to an algorithm that was not built with their interests in mind.

The Bottom Line

ByteDance built a safer version of TikTok because China’s government told them to.

Our children deserve the same standard. Not because of geopolitics. Not because China is doing anything right. But because the technology to protect children exists, the company that owns it has proven they can deploy it, and the only reason our children don’t have it is that nobody has demanded it loudly enough yet.

For Ofcom, for everyone enforcing the Online Safety Act, and for everyone reading this, that is the work still in front of us.

If this has been useful, please share it with someone who needs to read it. And if you want to explore TikTok’s Family Pairing settings in more detail, I’ll be putting together a full walkthrough guide shortly.

If you or someone you know needs support right now, Childline is available 24 hours a day, 7 days a week. Call 0800 1111 or visit childline.org.uk. It’s free, it’s confidential, and it’s there for every child in the UK.

Stay safe out there.

As always, thank you for your support. Please share this across your social media, and if you do have any comments, questions, or concerns, then feel free to reach out to me as I am always happy to spend some time helping to protect children online.

Remember that becoming a paid subscriber means supporting Childline, a charity that is very close to my heart and is doing amazing things for people. I will donate all subscriptions collected to Childline every six months, as I don’t do any of this for financial gain.

Sources:

Center for Humane Technology / 60 Minutes (Tristan Harris, 2022), MIT Technology Review, ABC News, Euronews Fact Check (Nov 2025), South China Morning Post, China-Britain Business Council / CBBC Focus, Internet Matters (2025), ICO (2025), Ofcom.