Instagram Just Announced A New Safety Feature

Here's What They Are Not Telling You

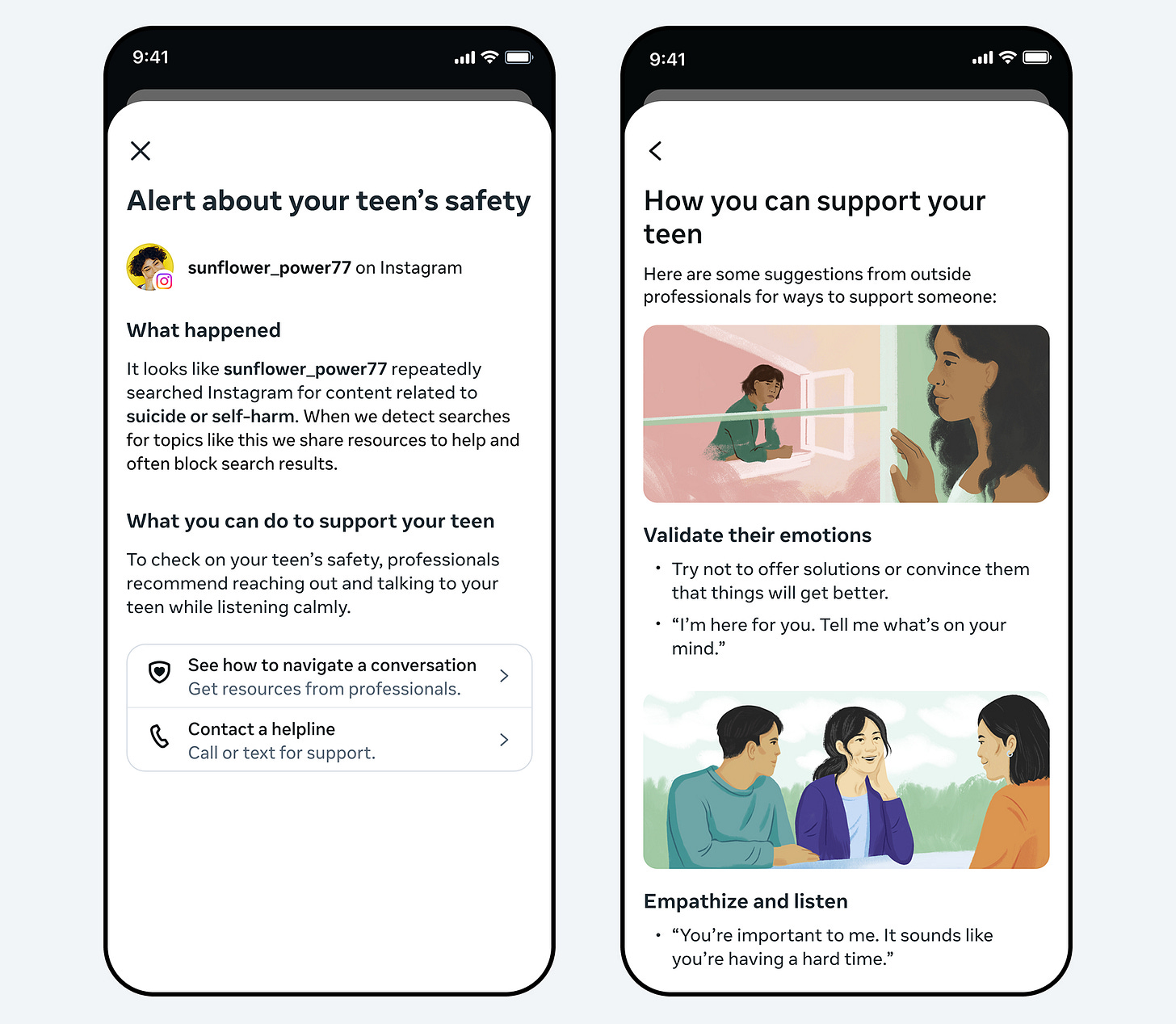

This week, Instagram announced something that, on the surface, sounds like a genuine step forward for child safety.[1] They’re rolling out a new feature that will alert parents if their teenager repeatedly searches for terms related to suicide or self-harm, such as phrases promoting self-harm, or simply the words “suicide” or “self-harm.”

The notifications will be sent to parents via the app, email, text, or WhatsApp, and will include links to expert resources to help you start a conversation with your child. They’re launching in the UK, US, Australia and Canada in the coming weeks, with more countries to follow later this year.

If you’re a parent, your first instinct might be relief: at last, they are actually doing something.

I want you to consider that reaction for a minute, because I think it deserves a bit of a deeper dive.

⚡Please don’t forget to react & restack if you appreciate my work. More engagement means more people might see it. ⚡

First — how does it actually work?

Before anything else, you need to understand what this feature does and doesn’t do.

The notifications will only reach you if you’re already enrolled in Instagram’s Parental Supervision feature. That requires both you and your child to set it up together. If you haven’t done that yet, this feature won’t reach you at all, but as I have suggested in the past, this is something you should do.

Once set up, Instagram says the alerts won’t trigger on a single search. They’ll fire if your child repeatedly searches for these terms within a short period of time, which Instagram describes as “a few searches.” The idea, they say, is to avoid unnecessary distress to parents whilst still erring on the side of caution.
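For readers who find it easier to think in code, here is a minimal Python sketch of what "repeated searches within a short period" could look like. To be clear, this is an illustration only and not Instagram's implementation: the threshold of three, the one-hour window, the two-word term list, and every name in it are assumptions, because Instagram has not published the real values.

```python
from collections import deque
from datetime import datetime, timedelta

# Assumed values: Instagram has only said "a few searches" in "a short period".
ASSUMED_THRESHOLD = 3
ASSUMED_WINDOW = timedelta(hours=1)
# Illustrative term list only; the real list is not public.
ASSUMED_TERMS = ("suicide", "self-harm")


class RepeatedSearchMonitor:
    """Hypothetical monitor that flags repeated searches in a rolling window."""

    def __init__(self, threshold=ASSUMED_THRESHOLD, window=ASSUMED_WINDOW):
        self.threshold = threshold
        self.window = window
        self._hits = deque()  # timestamps of recent flagged searches

    def record_search(self, query: str, when: datetime) -> bool:
        """Return True if this search would trigger a parent alert."""
        text = query.lower()
        if not any(term in text for term in ASSUMED_TERMS):
            return False  # unrelated searches are ignored entirely
        self._hits.append(when)
        # Drop flagged searches that have fallen outside the window.
        while self._hits and when - self._hits[0] > self.window:
            self._hits.popleft()
        return len(self._hits) >= self.threshold
```

The point of the sketch is that a single search never returns True, and neither do searches spread hours apart. That is consistent with what Instagram describes, but it also means the outcome depends entirely on numbers only Instagram knows.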

So what should you expect? If an alert arrives, you’ll get an overview of what’s happened and resources to help you have that conversation. Instagram also says the searches themselves are already blocked, so your child isn’t being served this content. They’re being redirected to crisis helplines and support resources instead.

That part, in isolation, is not nothing and would be a huge stride forward in protecting children online. A child repeatedly trying to access self-harm content is a child who may be struggling. A parent being made aware of that is a parent who can help them.

But, and this is the part I really need you to hear, the headline hides several things that matter hugely.

The opt-out that Instagram isn’t shouting about

Here’s what I haven’t seen in most of the coverage this week.

Instagram’s Teen Accounts, the framework that these new notifications sit within, work like this: children under 16 need a parent’s permission to make any of the built-in protections less strict. That’s the rule. Under 16, your child cannot unilaterally opt out of parental supervision.

At 16, that changes.

A 16-year-old on Instagram can modify their own supervision settings without your approval unless they have invited you to supervise the account. Which means a 16-year-old who doesn’t want you receiving these alerts can, in theory, remove parental supervision from their account entirely, and you won’t be told they’ve done it.

Think about that. The children most likely to be searching for this content are teenagers. And a significant number of those teenagers are 16 or 17. They are old enough, under Instagram’s rules, to quietly switch off the very mechanism that would have let you know they were struggling.

I’m not saying every 16-year-old will do this. Most likely won’t. But the ones who are most at risk, who most need you to know, are often the ones most likely to hide it.

Let’s talk about what Meta isn’t doing

I’ve spent over a decade working in cybersecurity and policing. I’ve investigated some of the darkest content available on the internet professionally. I understand better than most what these platforms are technically capable of, and more importantly what they choose not to do.

Meta has the ability to limit the reach of harmful content. They have the algorithmic power to stop serving self-harm and eating disorder content to vulnerable teenagers. TikTok’s algorithm, as I’ve written about before, has been shown to serve self-harm content to newly registered teen accounts within minutes. Instagram’s recommendation engine is no different in principle.

Meta knows this. Their own internal research, leaked and documented,[2] showed that Instagram knew it was harmful to teenage girls. They continued anyway. Right now, Meta faces a major trial in California[3] where plaintiffs allege the company pursued growth at all costs while ignoring the documented impact on children’s mental health. Both Mark Zuckerberg and Instagram’s head Adam Mosseri have already faced questioning in court.

So when I see a feature that puts the burden of notification back on parents, that requires you to set up a tool, stay enrolled, hope your child doesn’t opt out, and then know what to say when the alert arrives, I ask myself a simple question.

Why is the responsibility landing on you?

Meta has the power to reduce the volume of harmful content that reaches children in the first place. This feature doesn’t do that. It watches what your child searches for and then tells you about it, if you have set up the capability. The content is still there, the algorithm is still there, and the design that makes these platforms addictive for young people is still there.

This is not child safety. It is the appearance of child safety, carefully timed, I might add, for a moment when the political pressure on Meta has never been higher and the UK Government are actively considering a social media ban for those under 16!

The bigger picture: the UK is watching

In January 2026, the House of Lords passed an amendment to the Children’s Wellbeing and Schools Bill[4] by 261 votes to 150, which would effectively ban social media for under-16s in the UK. The Government has launched a three-month consultation on children’s social media use, with options including overnight curfews, restrictions on addictive design features like infinite scrolling, and raising the digital age of consent from 13 to 16.

Australia has already implemented an under-16 ban, and Meta removed 544,000 accounts in the first month of Australian enforcement alone.

The platforms know what’s coming. They are watching governments move closer to legislative action, and they are doing what large corporations do when faced with regulation: producing announcements that look like action, that generate positive headlines, and that allow them to point at a list of features when politicians ask what they’re doing to protect children. Or, as I like to call it, using smoke and mirrors with a bit of sleight of hand!

I’m not saying this feature is worthless. I genuinely believe that any tool that helps a parent identify a child in crisis has value. But I won’t let that value be used as a smokescreen for everything that isn’t changing.

What you should actually do

If you have a teenager on Instagram, regardless of how you feel about this announcement, here is what I’d ask you to do today.

Set up Parental Supervision on Instagram if you haven’t already. Go to Settings → Supervision and follow the steps to link your account to your child’s. This is the feature the new notifications sit within; without it, you won’t receive them.

As always though, my best advice is to have an honest conversation about why you’re doing it. You aren’t spying on them, and it isn’t meant as a punishment. You’re doing it because you love them and want to know if they’re struggling, so you can protect them and get them the help they need, and because you’d rather they came to you than searched for answers on a platform that doesn’t care about them the way you do.

If your child is 16 or over, talk to them directly about the opt-out. Explain what the feature does, and ask them directly to keep it enabled. Make it a conversation, not an order.

As always, keep an eye out for the signs that don’t require an algorithm for you to spot: withdrawal, sleep disruption, changes in appetite, and reluctance to talk. A child in crisis will rarely announce it, but they almost always show it to the people who are paying attention.

A final thought

77% of UK children aged 9–17 experienced harm online last year. That’s up 8% in a single year. Meta’s announcement this week will do nothing to change that statistic.

What will change it, though, are parents who are informed, engaged and unafraid to have difficult conversations, schools with safeguarding leads who understand the landscape, and a government that holds platforms to account rather than accepting a press release as a substitute for accountability.

This feature is a breadcrumb. The children need the whole loaf.

⚡ If this resonates, please like and repost. Every share puts this in front of another parent or teacher who needs it. ⚡

If your child is struggling right now, please contact Childline on 0800 1111 — free, confidential, available 24/7. For parents and adults concerned about a child, the NSPCC helpline is 0808 800 5000.

As always, thank you for your support. Please share this across your social media, and if you do have any comments, questions, or concerns, then feel free to reach out to me here or on BlueSky, as I am always happy to spend some time helping to protect children online.

Remember that becoming a paid subscriber means supporting a charity that is very close to my heart and does amazing things for people: Childline. I will donate all subscriptions collected every six months, as I don’t do any of this for financial gain.

[1] https://about.fb.com/news/2026/02/new-meta-alerts-let-parents-know-if-teen-may-need-support/

[2] https://www.congress.gov/117/meeting/house/114054/documents/HHRG-117-IF02-20210922-SD003.pdf

[3] https://www.bbc.com/news/articles/c5y42znjnjvo

[4] https://www.bbc.com/news/articles/cz0pnekxpn8o
